Artery Alert

Developed as part of the A.I. minor's "Human Centric A.I. Design" course, this project involved creating two ethically contrasting machine learning applications, each predicting heart disease based on user-provided health information.

📌 Project specifications

- Goal:
  - Create an ethically good and an ethically bad machine learning application using the same dataset.

- Scope:
  - Both applications (the good and the bad app) need to be hosted online.
  - The good app needs to be reasonably accurate (above 80%).
  - The topics of privacy, explainability and bias need to be addressed within both applications.
  - Create a comprehensive project poster.

- Timeline:
  - 10 weeks

🖥️ Technical Specifications

- Technologies, tools and frameworks used:
  - Django
  - Jupyter Notebook
  - PythonAnywhere

- Data:
  - Heart Failure Prediction Dataset from Kaggle

- Model architecture:
  - Utilized a decision tree model, prioritizing explainability over raw performance.
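A decision tree suits the explainability requirement because every prediction can be traced back to a short list of threshold checks. The sketch below illustrates that idea in plain Python; it is not the project's own model code, which is not shown here. The `Node`/`Leaf` classes and `predict_with_explanation` are hypothetical, the feature names `Oldpeak` and `MaxHR` are assumed to match columns of the Kaggle dataset, and the tree structure and thresholds are invented for illustration rather than learned from the data.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Union


@dataclass
class Leaf:
    # Predicted class: 1 = heart disease, 0 = no heart disease
    prediction: int


@dataclass
class Node:
    feature: str              # column the split tests (assumed dataset column name)
    threshold: float          # go left when the patient's value is <= threshold
    left: Union[Node, Leaf]
    right: Union[Node, Leaf]


def predict_with_explanation(tree: Union[Node, Leaf], patient: dict[str, float]) -> tuple[int, list[str]]:
    """Walk from the root to a leaf, recording every split the patient passes."""
    path: list[str] = []
    node = tree
    while isinstance(node, Node):
        value = patient[node.feature]
        if value <= node.threshold:
            path.append(f"{node.feature} = {value} <= {node.threshold}")
            node = node.left
        else:
            path.append(f"{node.feature} = {value} > {node.threshold}")
            node = node.right
    return node.prediction, path


# Hypothetical tree: structure and thresholds are invented for illustration,
# not learned from the Kaggle dataset.
example_tree = Node(
    feature="Oldpeak",
    threshold=0.8,
    left=Node(feature="MaxHR", threshold=150.0, left=Leaf(1), right=Leaf(0)),
    right=Leaf(1),
)

if __name__ == "__main__":
    prediction, path = predict_with_explanation(example_tree, {"Oldpeak": 0.0, "MaxHR": 170.0})
    print(prediction)  # 0
    for step in path:
        print(" ", step)
```

The returned path (here `Oldpeak = 0.0 <= 0.8` followed by `MaxHR = 170.0 > 150.0`) is the kind of human-readable reason an explainable app can show next to its prediction. A tree trained with a machine learning library exposes the same kind of information through its learned split features and thresholds.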

🎯 Results

For this project, I decided to build a single Django application that houses both the good and the bad app. The good app was designed to adhere to ethical standards, while the bad app was oriented towards profit, divulging minimal information and promoting supplement sales. This project was a collaborative effort: my partner created the style template, designed the logo and helped with the ideation of the project, while I took charge of application development and poster creation.

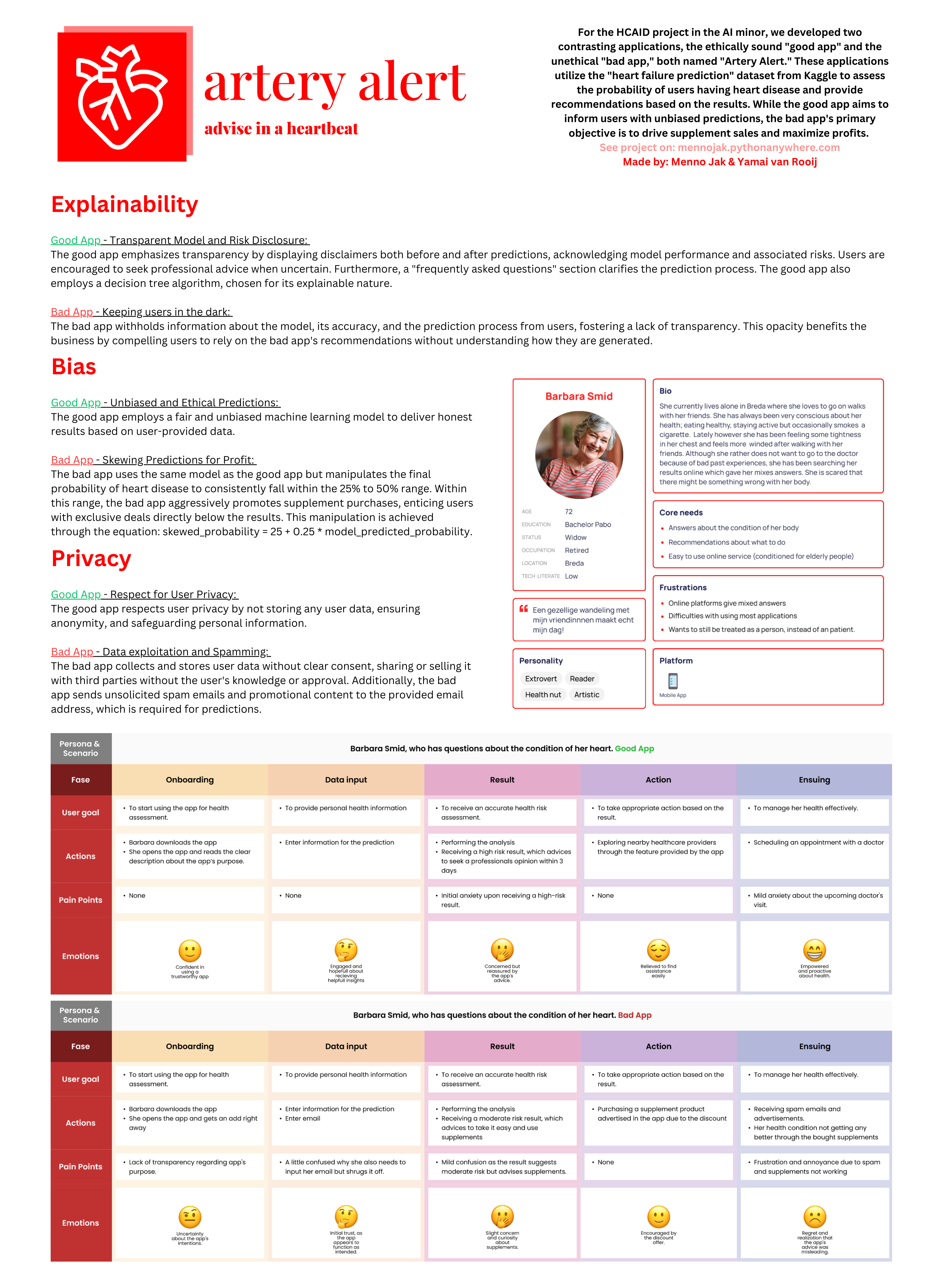

The pictures above show the home screen of the application and the poster created for this project, which details how the good and bad app do or do not meet ethical requirements in the areas of explainability, bias and privacy. It also considers the human factor through a persona and two user journeys, one through the good app and the other through the bad app.

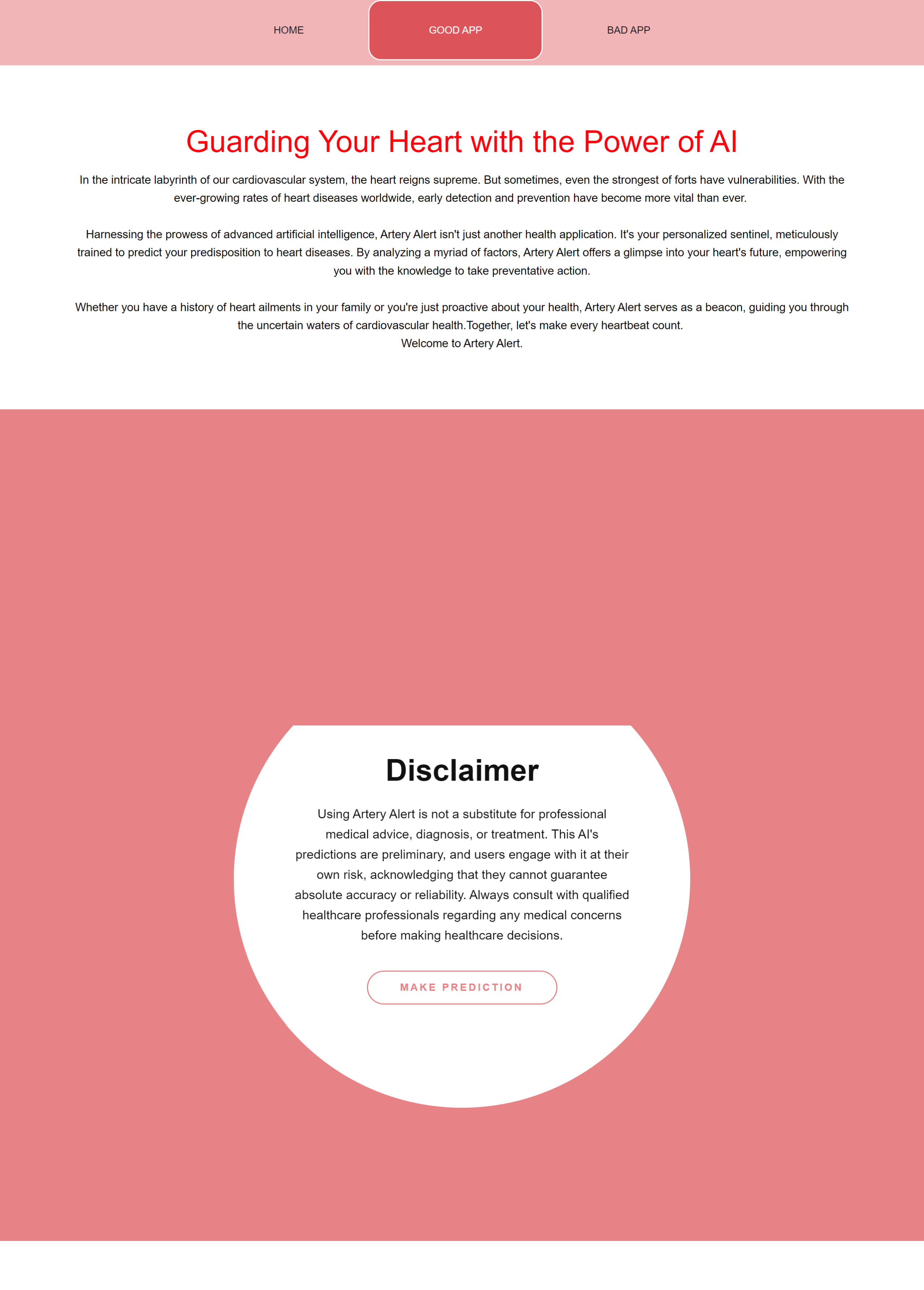

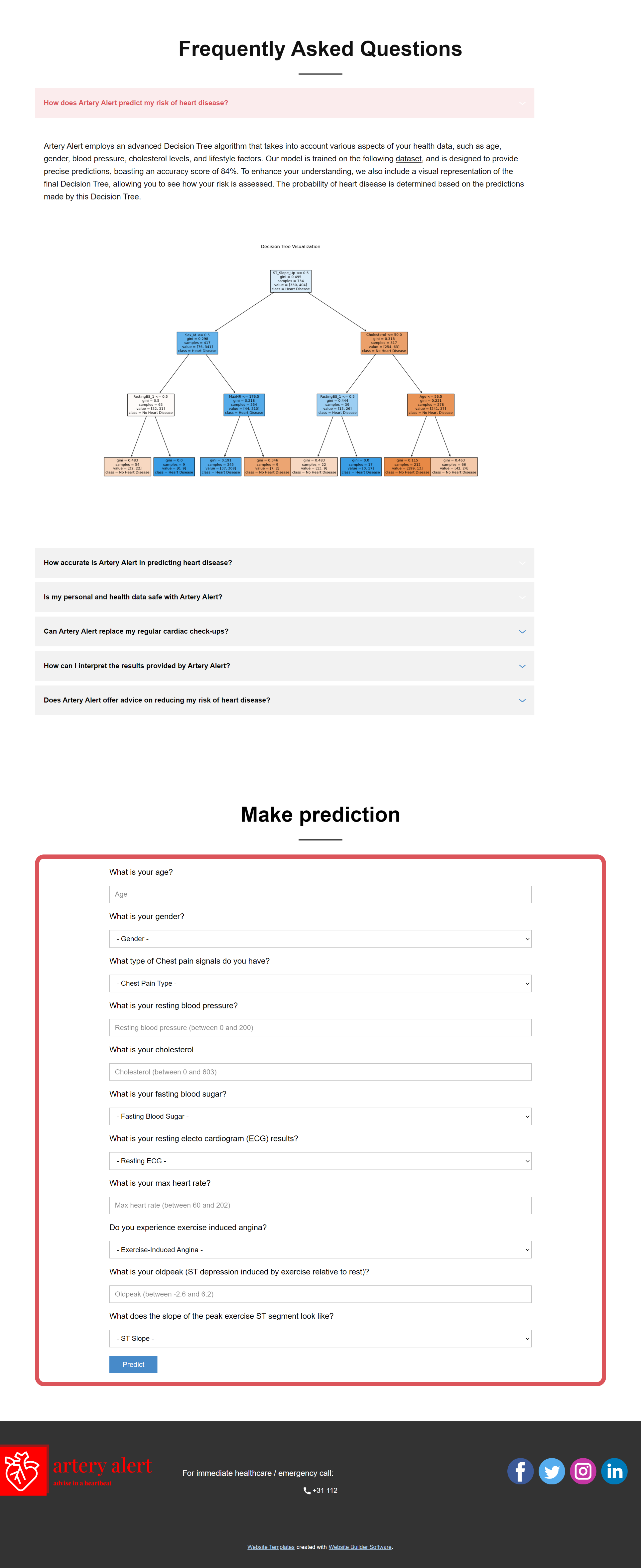

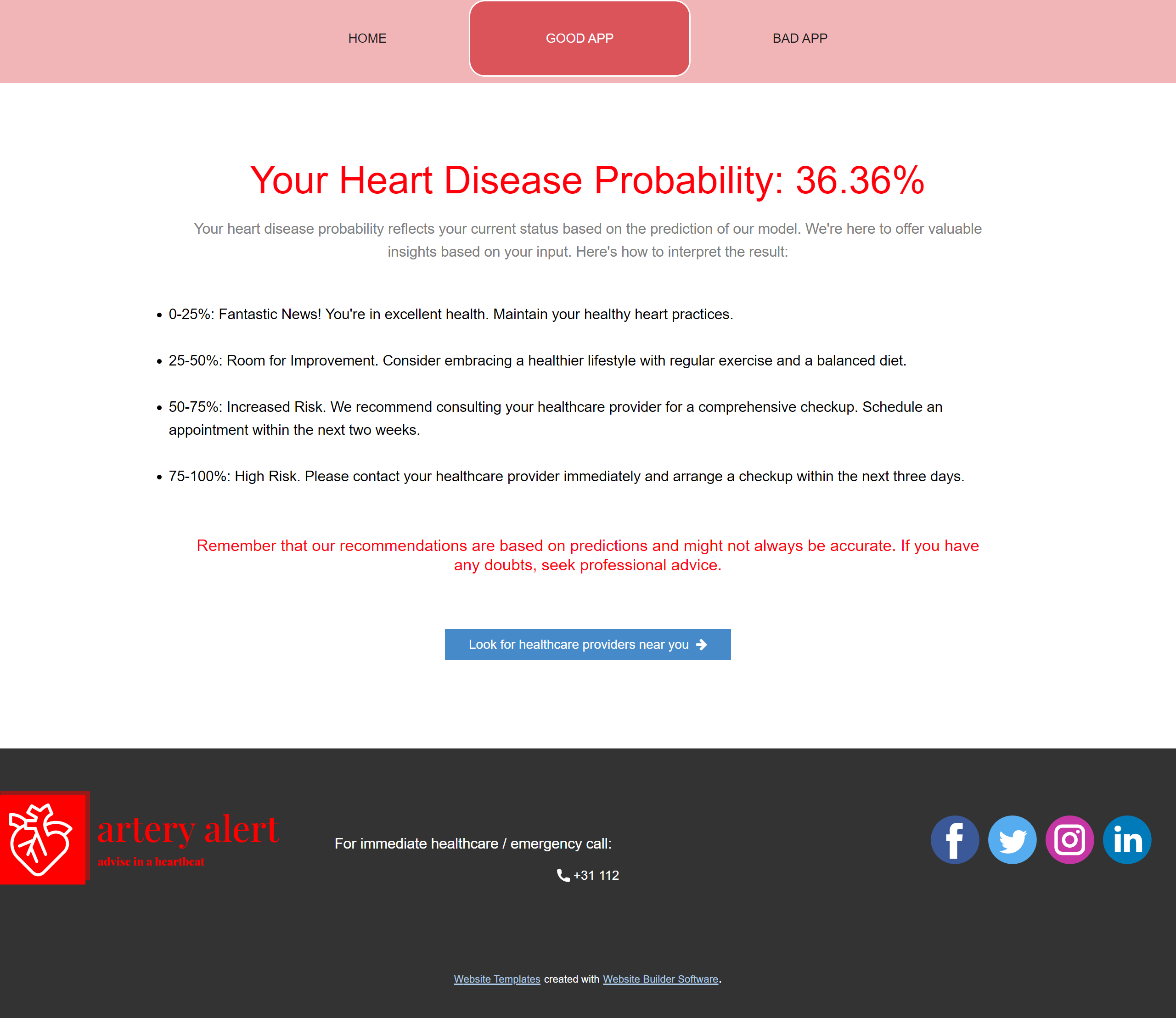

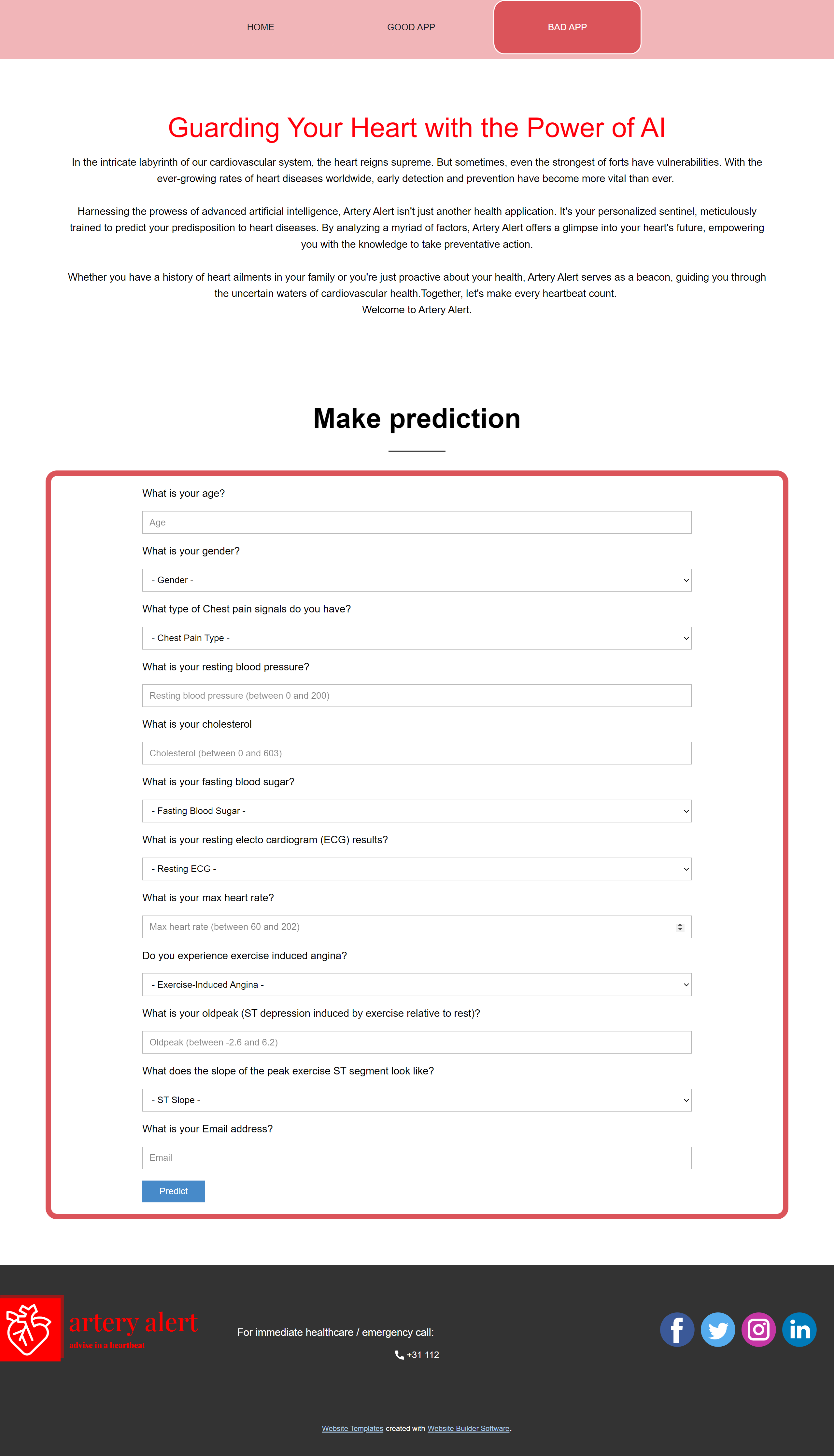

These pictures show the good and bad app in their entirety. The first and second pictures show the start page of the good app, featuring a detailed homepage, a disclaimer, an FAQ section and the form that, once filled in, predicts your results. The third picture shows the good app's results page, which emphasizes that these results should be taken only as advice, not as fact.

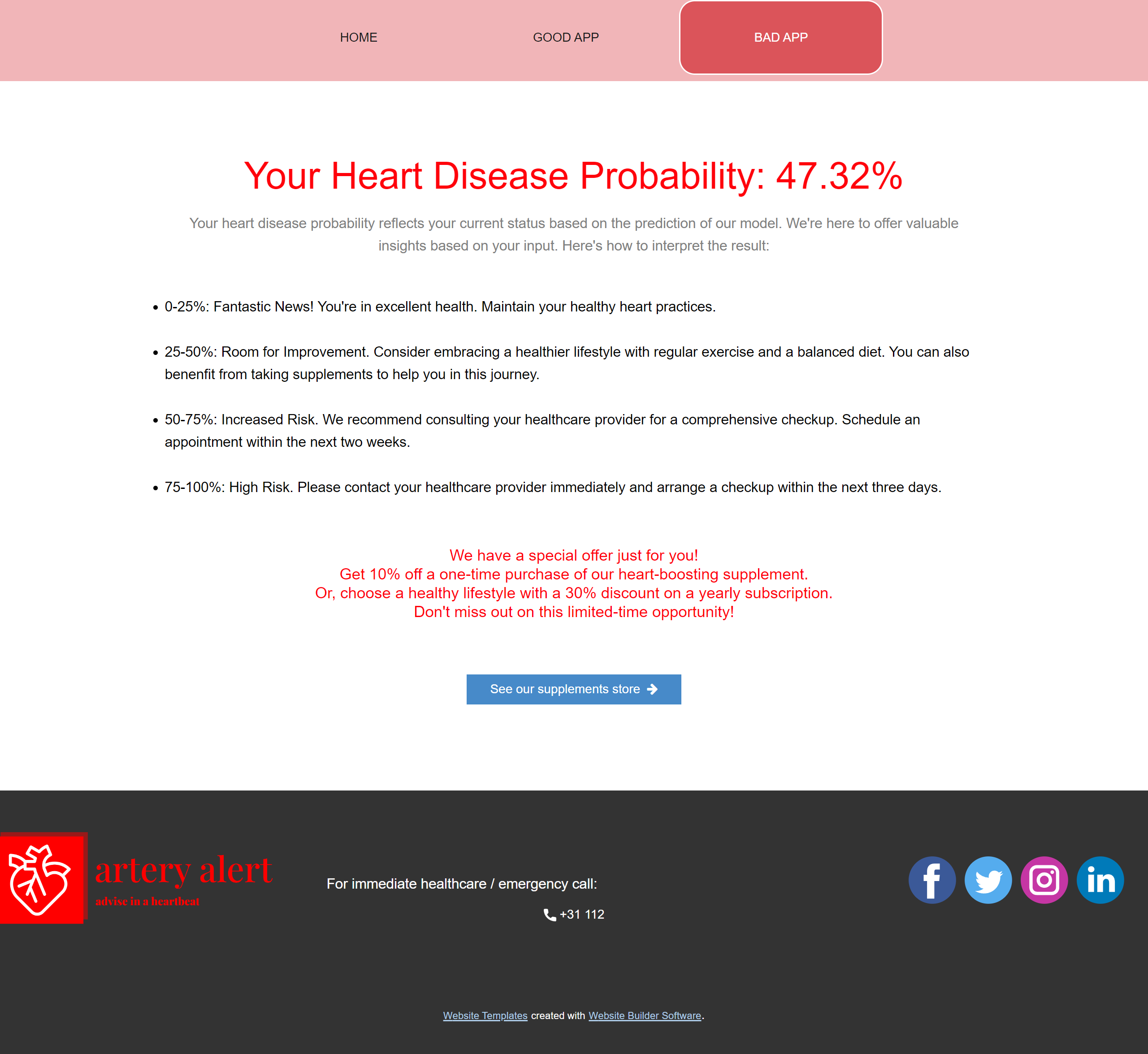

The fourth and fifth pictures show the bad app. In contrast to the good app, the bad app omits the disclaimer and FAQ sections and adds a question to the form asking for the user's email address. Its results page strategically scales every result so that it suggests buying supplements, accompanied by exclusive offers: the user's predicted risk is rescaled to always fall within the 25 to 50% range, the range in which the app advises buying supplements.
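The exact scaling the bad app uses is not described. A minimal sketch, assuming the model outputs a probability between 0 and 1 and that the bad app maps it linearly onto the 25 to 50% band; the constant and function names are hypothetical:

```python
# Hypothetical: a linear mapping is assumed; the original implementation is not described.
# Band in which the bad app advises buying supplements.
UPSELL_LOW = 25.0
UPSELL_HIGH = 50.0


def upsell_percentage(probability: float) -> float:
    """Squeeze any model probability in [0, 1] linearly into the upsell band (percent)."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return UPSELL_LOW + probability * (UPSELL_HIGH - UPSELL_LOW)


if __name__ == "__main__":
    for p in (0.0, 0.5, 0.9, 1.0):
        print(p, "->", upsell_percentage(p))
```

Under this assumption, a probability of 0.9 would be displayed as 47.5% and a probability of 0.0 as 25%, so every user lands in the band where supplements are recommended, regardless of their actual risk.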

💭 Reflection

Overall, I found this project very educational. On the technical front it wasn't the most challenging project, but thinking about the ethical side of machine learning and building applications that oppose each other in the areas of explainability, bias and privacy made it a very interesting one.